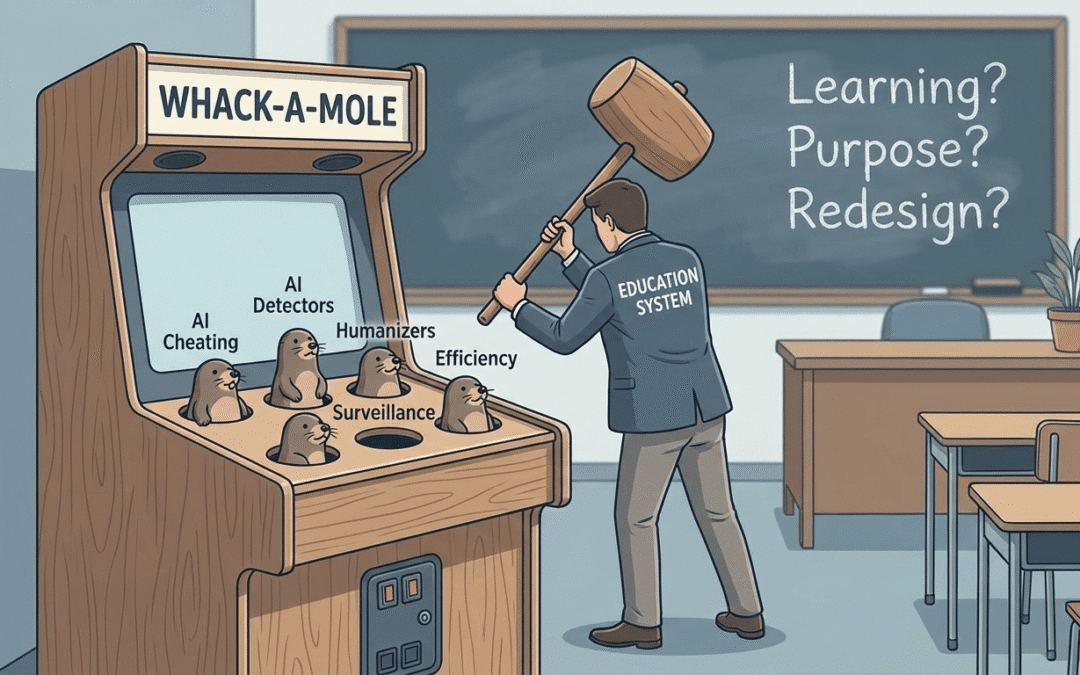

Two articles in the same newsletter, one from EdTech Magazine and one from NBC News, caught my attention this week. The first focused on how AI tools are helping teachers drive efficiency, save time, streamline workflows, and automate tasks. The second highlighted the growing arms race in higher education (though it applies to K-12 as well) between AI detectors and AI “humanizers,” with students learning to outmaneuver surveillance systems. Placed side by side, they reveal something deeper. We are not witnessing a coherent or sustainable AI strategy in education. We are witnessing a cat-and-mouse game.

On one side:

AI as productivity infrastructure.

On the other:

AI as compliance enforcement.

But in both cases, the conversation centers on efficiency and policing, not on whether learning itself has been redesigned for an AI-rich world. Historically, one could reasonably make a similar argument about how earlier technologies were implemented in education. If students are learning to “sound human” to avoid detection… If institutions are investing in increasingly sophisticated surveillance tools… If teachers are primarily using AI to move faster within the same structures… Then we have to ask:

Are we adapting learning?

Or are we simply optimizing and defending legacy systems?

Our current moment requires much more than tool fluency and policy updates.

It requires us to interrogate:

• What counts as authentic thinking?

• Who defines academic integrity in an AI era, and from what disposition?

• Whether our assessments measure learning or just independence from tools.

• And whether surveillance is quietly and definitively replacing trust.

The risk isn’t just that AI is moving too fast. The risk is that our response remains reactive, oscillating between efficiency and enforcement, without addressing purpose, power, and pedagogy. The real inflection point, then, isn’t technological; it’s analytical and philosophical.