Many within education are feeling increasing pressure regarding generative AI, and that pressure likely comes from both internal and external sources. In response, educational institutions at every level have looked to generative AI detection programs as a means of protecting academic integrity (a term I rarely see defined in a contemporary or practical manner). At first glance, this appears to be a logical choice: if students are using generative AI in ways that detract from or circumvent their learning, then identifying when they do so could help restore appropriate practice in our academic settings.

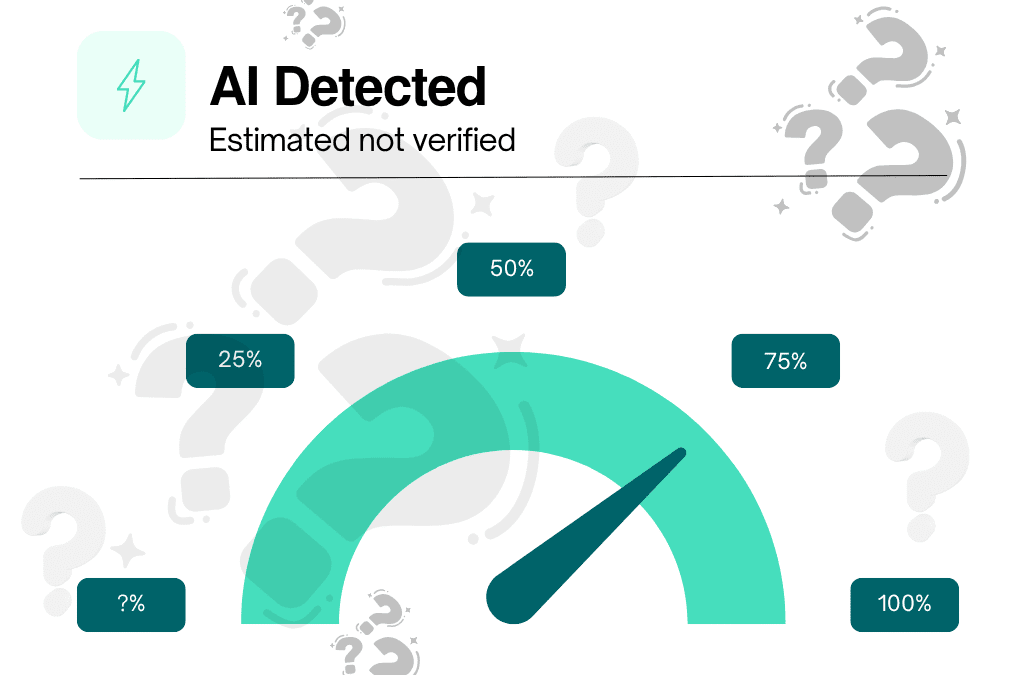

However, the premise of this solution deserves closer scrutiny. The issue isn’t simply whether AI detection software works. It’s that it creates the illusion of certainty where none exists. Even beyond conceptual concerns, research continues to show that these tools produce inconsistent results, including false positives on fully human-written work (Evaluating the accuracy and reliability of AI content detectors in academic contexts). In other words, what is often treated as evidence is, at best, probabilistic guesswork.
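To make "probabilistic guesswork" concrete, here is a minimal sketch of the base-rate arithmetic behind a single flag. Every number in it is a hypothetical assumption chosen for illustration, not a measured property of any real detector, and the function name `prob_ai_given_flag` is my own.

```python
def prob_ai_given_flag(base_rate, true_positive_rate, false_positive_rate):
    """Bayes' rule: probability a flagged submission was actually AI-written."""
    flagged_ai = base_rate * true_positive_rate            # AI-written and flagged
    flagged_human = (1 - base_rate) * false_positive_rate  # human-written and flagged
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical assumptions: 10% of submissions are AI-written, the detector
# flags 90% of those, and it wrongly flags 5% of human-written work.
posterior = prob_ai_given_flag(0.10, 0.90, 0.05)
print(round(posterior, 2))  # 0.67
```

Under these assumed numbers, roughly one flagged student in three wrote the work themselves. Different assumptions change the figure, but a flag remains a probability, never a finding of authorship.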

The Illusion of Certainty

AI detectors run on probabilities; they cannot tell who wrote what. Instead, they look for patterns in syntax, style, statistical regularities, and overall structure. These patterns reflect both how we are taught to write and how we go on to develop our writing “voice.” The key thing to keep in mind, therefore, is that similarity ≠ origin. There’s a substantial body of research showing that these systems cannot be meaningfully validated in their ability to identify the actual source of a piece of writing (e.g., Heads we win, tails you lose: AI detectors in education). In other words, since most people don’t know the origins of the writing they evaluate, they can’t say whether or not a detected result was accurate. And yet these results are treated as fact, or at least as proof of a student’s guilt: not as a springboard for investigation, but as grounds for suspicion. To get around this, various layers are placed on top of each other:

-Looking for alleged “AI markers” in written submissions.

-Evaluating work using several detectors.

-Comparing students’ submissions to AI-generated content.

-Measuring differences between students’ writing and prior examples of their own work.

Separately and together, these methods give the impression of verification. However, they all rest on the same basic assumption: that the tool being used is correct. That is not a guarantee of correctness; it is confirmation bias masquerading as process.

From Integrity to Gate-keeping

Academic integrity is based upon rules, fairness, transparency, and trust. However, once educational institutions begin to rely on tools that cannot establish with certainty that academic dishonesty occurred, a paradigm shift follows. A guilty-until-proven-innocent mindset takes hold. The burden begins to rest with the student instead of the institution. Suspicion precedes evidence. Students are expected to demonstrate their innocence. Furthermore, in some instances, this goes beyond mere expectations:

-Hidden triggers embedded in writing assignments to “catch” students using AI.

-Surveillance tools that monitor students’ keystrokes and writing habits.

-Expectations that students maintain complete records of their processes.

And let’s not forget the growing use of AI humanizers, tools that rewrite text so that it reads as less machine-like. These are not neutral procedures. Instead, they turn the learning environment from one focused on inquiry and development into one centered upon compliance and defensiveness. Therefore, we need to consider: Are we protecting academic integrity or enforcing academic gatekeeping?

The False Binary

There is a persistent misconception in many discussions about AI in education that all student work is either human- or AI-generated.

Student work is neither.

Students are doing more than outsourcing their writing to AI; they are also working alongside it. The lines between drafting, editing, prompting, and revising are blurred. Attempts to place student writing into a binary framework therefore ignore how writing has evolved.

Predictably, this creates a nearly impossible standard: students are judged not only on what they think but also on whether their writing appears sufficiently “human,” according to tools that cannot reliably or consistently define that difference.

The True Problem: Design

When we step back from the current state of affairs, another pattern emerges. Using AI detectors is not simply a matter of technology; it is a response to a larger issue: a lack of intentionality in how we design learning experiences and assessments.

When tasks can easily be outsourced, the impulse has been to secure them through detection, monitoring, and restriction. However, that approach assumes that the problem lies in student behavior.

What if the problem lies in the design itself?

-Assessments prioritizing product over process.

-Assignments lacking authentic context and relevance.

-Evaluation models rewarding completion over thought.

AI did not create these issues. It brought them to light.

What Our Present Time Really Needs

To be clear, this is not a call for abandoning academic integrity. Quite the contrary, it is a call to rethink how we uphold it. Academic integrity cannot, and should not, be reduced to detection.

It must be embedded in:

-How we design learning experiences.

-How we define and measure thinking.

-How we empower students to take ownership of, and accountability for, their work.

This means moving towards approaches that:

-Make thinking visible without resorting to surveillance.

-Value process, reasoning, and justification.

-Recognize the reality that AI exists regardless of our ability to prevent its use.

-Enable students to engage critically with tools rather than merely avoid them.

A Different Set of Questions

Discussions around AI in education often center on control:

-How do we stop misuse?

-How do we detect it?

-How do we force compliance?

Those questions are legitimate, but they look at only part of the picture.

A far more important question might be:

What does it mean to design learning when AI already exists?

Until we answer that question, no detector will solve the problem. The predictable cycle of applying technical fixes without addressing the underlying problems will continue to hold back the change and growth that are needed.